IUP ISI/MediaWiki-Silk/Architecture: Difference between revisions

| Line 57: | Line 57: | ||

'''Objects life cycle''' | '''Objects life cycle''' | ||

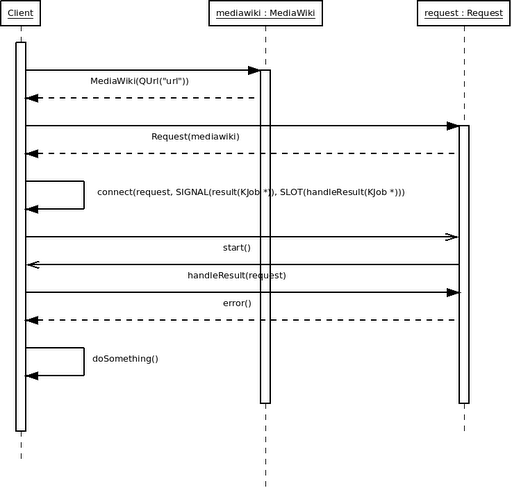

The following schema presents an object life cycle. As we can see, the user instantiate a mediawiki before requesting and destroy it after all requests. | |||

[[File:MediaWiki_Silk_Sequence_uml.png]] | [[File:MediaWiki_Silk_Sequence_uml.png]] | ||

Revision as of 18:07, 4 November 2010

Introduction

This document presents the architecture of LibMediaWiki, a library based on the Qt/KDE frameworks that interfaces with MediaWiki to provide MediaWiki functions to developers.

Purpose

This document provides a high-level overview of the evolving technical architecture of the MediaWiki library. It outlines the technologies and the technical choices that the library developers will use to develop it.

References

- Qt Framework is a cross-platform library http://doc.qt.nokia.com/4.7/

- KDE is an open source desktop and set of libraries based on Qt http://kde.org/

- MediaWiki is a free software wiki package written in PHP, originally for use on Wikipedia http://www.mediawiki.org/wiki/MediaWiki

Architecture choices

Synchronous or asynchronous?

Two choices are possible for request management in the library: synchronous and asynchronous.

The synchronous way leads us to use normal function calls. However, since the caller waits for the function to return, the interface can freeze, especially when there is a lot of latency. On the other hand, this way does not require signals.

The asynchronous way does not block the interface because the application does not wait for the result of each request before continuing to execute. This method leads us to use signals to access the result.

On the one hand, the synchronous way requires a QEventLoop to avoid the freeze and a QNetworkAccessManager used in a synchronous manner. Alternatively, it can involve the use of several threads in the application.

On the other hand, the asynchronous way requires signals and a QNetworkAccessManager used in asynchronous mode.

To conclude, we choose the asynchronous way because it solves the interface problem. Moreover, it is the method already used in the initial library architecture. However, the asynchronous way raises the problem of how to access the result.
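The difference between the two styles can be sketched in plain standard C++, without Qt. This is only an illustration under assumptions: `EventQueue`, `AsyncTitleRequest` and `fetchTitleSync` are hypothetical names, the queue stands in for the Qt event loop, and the callback stands in for a slot connected to a signal.

```cpp
#include <functional>
#include <queue>
#include <string>
#include <utility>

// Hypothetical stand-in for the Qt event loop: it holds work to run later.
class EventQueue {
public:
    void post(std::function<void()> task) { tasks_.push(std::move(task)); }

    // Runs every pending task, including tasks posted while running.
    void processEvents() {
        while (!tasks_.empty()) {
            std::function<void()> task = std::move(tasks_.front());
            tasks_.pop();
            task();
        }
    }

private:
    std::queue<std::function<void()>> tasks_;
};

// Synchronous style: the caller is blocked until the value is returned.
inline std::string fetchTitleSync() { return "Main Page"; }

// Asynchronous style: start() returns at once and the result is delivered
// later through the callback, which plays the role of a slot.
class AsyncTitleRequest {
public:
    explicit AsyncTitleRequest(EventQueue &queue) : queue_(queue) {}

    void start(std::function<void(const std::string &)> onResult) {
        queue_.post([onResult] { onResult("Main Page"); });
    }

private:
    EventQueue &queue_;
};
```

After `start()` returns, the caller has no result yet; the result only appears once `processEvents()` has run the queued task. This is the "access to the result" problem that the next section addresses.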

Get the request’s result

We can use three methods to get the result. First, we can develop accessors on each request class. The risk is that an accessor is called before the signal is emitted, that is, before the result is available, because the accessor itself is a synchronous call. A solution can be to state this constraint in the API documentation.

The second method is to use a signal that carries the result as a parameter. This use of signals matches the Command design pattern: the query calls the action to perform, a slot, in the client. This way respects the asynchronous semantics, but the result is passed by value. Passing by value can be a problem because copying the result is expensive and the copy is often useless. A solution could be to pass a pointer, a smart pointer or a reference instead.

The last method also uses a signal with the result as a parameter, like the previous one, but the result is passed by reference. This method respects the asynchronous semantics. The problem is that errors are less visible to the slots.

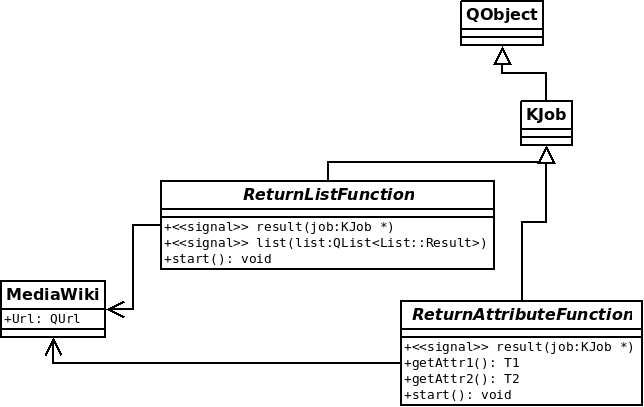

To conclude, we choose to use two solutions. For the “attribute” classes, we will use the first method. For the “list” classes, we will use the second method.
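The two retained solutions might look like the following sketch. It is written in plain standard C++ so that it does not depend on Qt: the `std::function` members stand in for Qt signals, and the class names `SiteNameRequest` and `PageListRequest` are hypothetical. The list is passed as a const reference here, which is one of the options mentioned above for avoiding a useless copy.

```cpp
#include <functional>
#include <string>
#include <vector>

// "Attribute" style (first method): the notification carries no data and
// the result is read afterwards through an accessor.
class SiteNameRequest {
public:
    std::function<void()> finished;  // stand-in for a Qt signal

    // Called by the library when the (simulated) reply arrives.
    void complete(const std::string &name) {
        name_ = name;
        done_ = true;
        if (finished) finished();
    }

    bool isDone() const { return done_; }

    // Only meaningful once isDone() is true, as the documentation must state.
    const std::string &siteName() const { return name_; }

private:
    std::string name_;
    bool done_ = false;
};

// "List" style (second method): the result travels with the notification.
class PageListRequest {
public:
    std::function<void(const std::vector<std::string> &)> pagesReceived;

    void complete(const std::vector<std::string> &pages) {
        if (pagesReceived) pagesReceived(pages);
    }
};
```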

Unique class or multiple classes?

Two choices are possible to create the library: develop a unique class or multiple classes.

With a unique class, all requests live in that class. There is no memory management to do, because destroying the MediaWiki object is sufficient. Nevertheless, having all requests in one class hinders the readability of the code. Finally, this way requires the application to handle a single request at a time.

With multiple classes, each request goes in its own class. This kind of development requires careful memory management. On the other hand, it improves readability and, above all, allows several requests to be started at the same time.

Using a unique class means that all methods are called in the normal way; for example, to list all pages we must call wiki->allpagesRequest(). We could find a way to let the application handle several requests, but it would be quite difficult and take a lot of time. To improve readability, we could put many comments in the code.

Using multiple classes leads us to pass a reference to a MediaWiki instance to the constructor of each class. We also have to define the object life cycle, and therefore to manage the memory.

To conclude, we choose to use multiple classes because we think that running several requests at once is important. Moreover, good code quality is a goal. This way is different from the development done at the beginning. However, it raises the problem of the object life cycle and of memory management.
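A minimal sketch of the multiple-classes layout, in plain standard C++, is given here as an illustration only. The class names `AllPagesRequest` and `SiteInfoRequest`, the `query()` method and the example URL are assumptions, not the library's actual API; the query strings follow the public MediaWiki web API (`list=allpages`, `meta=siteinfo`).

```cpp
#include <string>
#include <utility>

// Shared connection settings; every request refers to one MediaWiki object.
class MediaWiki {
public:
    explicit MediaWiki(std::string apiUrl) : apiUrl_(std::move(apiUrl)) {}
    const std::string &apiUrl() const { return apiUrl_; }

private:
    std::string apiUrl_;
};

// One request per class: each receives the MediaWiki instance by reference
// in its constructor, so several requests can exist at the same time.
class AllPagesRequest {
public:
    explicit AllPagesRequest(const MediaWiki &wiki) : wiki_(wiki) {}
    std::string query() const { return wiki_.apiUrl() + "?action=query&list=allpages"; }

private:
    const MediaWiki &wiki_;
};

class SiteInfoRequest {
public:
    explicit SiteInfoRequest(const MediaWiki &wiki) : wiki_(wiki) {}
    std::string query() const { return wiki_.apiUrl() + "?action=query&meta=siteinfo"; }

private:
    const MediaWiki &wiki_;
};
```

Because each request only holds a reference, the MediaWiki object must outlive every request created from it, which is why the object life cycle has to be defined.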

Objects life cycle

The following diagram presents an object life cycle. As we can see, the user instantiates a MediaWiki object before making requests and destroys it after all requests have completed.

[[File:MediaWiki_Silk_Sequence_uml.png]]

Memory management

Regarding memory management, our classes inherit from KJob, which inherits from QObject, so we use the Qt object mechanism to manage the memory. Inheriting from QObject brings concepts like the parent. An advantage is that, if we use the parent system, we do not have to take care of deletion: a child object is deleted automatically when its parent is deleted.
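The parent mechanism can be modelled in a few lines of plain standard C++. This is a simplified model of the idea and not Qt's implementation: `Object` and `CountedJob` are hypothetical names, and real code would simply pass a parent to the QObject-derived constructor.

```cpp
#include <algorithm>
#include <vector>

// Minimal model of parent/child ownership: a parent deletes its children.
class Object {
public:
    explicit Object(Object *parent = nullptr) : parent_(parent) {
        if (parent_) parent_->children_.push_back(this);
    }

    virtual ~Object() {
        // Detach from the parent so that it does not delete us a second time.
        if (parent_) {
            std::vector<Object *> &siblings = parent_->children_;
            siblings.erase(std::remove(siblings.begin(), siblings.end(), this),
                           siblings.end());
        }
        // Each deleted child removes itself from children_, so loop until empty.
        while (!children_.empty()) {
            delete children_.back();
        }
    }

    Object(const Object &) = delete;
    Object &operator=(const Object &) = delete;

private:
    Object *parent_;
    std::vector<Object *> children_;
};

// Hypothetical request job that records its own destruction.
class CountedJob : public Object {
public:
    CountedJob(Object *parent, int &destroyed) : Object(parent), destroyed_(destroyed) {}
    ~CountedJob() override { ++destroyed_; }

private:
    int &destroyed_;
};
```

In this model, jobs created with `new CountedJob(&wiki, counter)` are never deleted explicitly; they are destroyed when the `wiki` object that owns them goes away.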

Requirements

Namespace

To avoid name conflicts with other libraries, we propose to define a namespace such as silk::. mediawiki:: was a possibility, but it would be redundant (mediawiki::MediaWiki).
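As a small illustration of the namespace proposal, the sketch below shows two classes with the same short name coexisting. The `otherlib` namespace and the `name()` method are invented for the example.

```cpp
#include <string>

namespace silk {
// Hypothetical minimal class; only the qualified name matters here.
class MediaWiki {
public:
    std::string name() const { return "silk::MediaWiki"; }
};
} // namespace silk

namespace otherlib {
// A class with the same short name coming from another (imaginary) library.
class MediaWiki {
public:
    std::string name() const { return "otherlib::MediaWiki"; }
};
} // namespace otherlib
```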

Binary compatibility

To ensure binary compatibility, the MediaWiki class will hold only a pointer to MediaWikiPrivate, which will contain the attributes. In this case, modifying the attributes does not break a class that uses MediaWiki.
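This technique is commonly called the d-pointer (or pimpl) idiom. A minimal sketch follows, assuming a single `url` attribute and a hypothetical `userAgent` attribute; the real attribute set of MediaWikiPrivate is not specified in this document.

```cpp
#include <string>
#include <utility>

// --- what would live in the public header ---
class MediaWikiPrivate;  // forward declaration only: the layout stays hidden

class MediaWiki {
public:
    explicit MediaWiki(std::string url);
    ~MediaWiki();
    MediaWiki(const MediaWiki &) = delete;
    MediaWiki &operator=(const MediaWiki &) = delete;

    const std::string &url() const;

private:
    MediaWikiPrivate *const d;  // the only data member of the public class
};

// --- what would live in the .cpp file ---
class MediaWikiPrivate {
public:
    std::string url;
    std::string userAgent = "silk";  // hypothetical attribute added later
};

MediaWiki::MediaWiki(std::string url) : d(new MediaWikiPrivate) { d->url = std::move(url); }
MediaWiki::~MediaWiki() { delete d; }
const std::string &MediaWiki::url() const { return d->url; }
```

Adding or removing attributes in MediaWikiPrivate changes only the .cpp file: the size and layout of MediaWiki itself stay one pointer, so code compiled against the old header keeps working.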

Architecture representation

The following diagram presents an abstract representation of the library. We can also see that the library classes inherit from the KJob class, which itself inherits from QObject.

Quality

In this part, we present two aspects of quality. First, we define the test architecture. Second, we describe the coding style.

Test

Test types

We decided to do two types of tests. First, we use unit tests for each class to ensure that the code meets its design and behaves as intended.

Second, we decided to measure code coverage, which describes the degree to which the source code of a program has been tested. It is a form of testing that inspects the code directly and is therefore a form of white-box testing. We add code coverage because it is easy to set up and it increases code visibility and quality.

Folder organisation

We will create a test folder in each project folder. For example, in the libmediawiki folder we will create a folder named “test” (“mediawiki/libmediawiki/test”). For each class, a test class file will be created.

Tools

We will use CTest to execute the tests. It is a cross-platform, open-source testing system distributed with CMake. CTest can perform several operations on the source code, including configuring, building and running a set of predefined runtime tests. It also includes several advanced tests such as coverage and memory checking.

We have decided to use CTest because it ships in the same distribution as CMake.

The test output will be displayed on a console or as XML and HTML.

Unit test:

For the unit test code, we have decided to use QTest because we want to use the Qt library as much as possible and avoid dependencies on other libraries.

Code coverage test:

For code coverage we will use GCov, a code coverage program. It will allow us to easily add new tests and to automate test execution.

Local test server

To run the tests we need a server, so we decided to implement a local server (fake server). Compared with a web server, a local server has the following advantages:

- Environment independence: we do not depend on a web server, so there are no Internet problems such as latency, which can increase the test execution time and make our tests unusable. Moreover, our local server does not need any maintenance.

- Control over data: the tester keeps control of the data and prevents it from being modified by any other tester.
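The idea of a fake server can be sketched as follows. This is a deliberately simplified, in-process stand-in written in plain standard C++: it uses no sockets, and the class name `FakeServer` and its methods are assumptions made for the illustration rather than the project's actual test server.

```cpp
#include <map>
#include <string>
#include <vector>

// In-process stand-in for a wiki server: tests register canned replies and
// can later inspect which queries the code under test actually sent.
class FakeServer {
public:
    // Registers the body to return for an exact query string.
    void setResponse(const std::string &query, const std::string &body) {
        responses_[query] = body;
    }

    // Records the query and returns the canned body, or an empty string
    // when no reply was registered for it.
    std::string handle(const std::string &query) {
        received_.push_back(query);
        std::map<std::string, std::string>::const_iterator it = responses_.find(query);
        return it != responses_.end() ? it->second : std::string();
    }

    const std::vector<std::string> &received() const { return received_; }

private:
    std::map<std::string, std::string> responses_;
    std::vector<std::string> received_;
};
```

Since every reply is defined by the test itself, the data cannot change between runs and no network latency is involved, which matches the two advantages listed above.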

Coding style

The project is based on KDE, so we decided to follow the kdelibs coding style. For this, we will use Artistic Style (astyle), an automatic code formatting tool. For more information on our coding style, you can click here.