GSoc/2023/StatusReports/QuocHungTran: Difference between revisions



Revision as of 08:35, 10 August 2023

Add Automatic Tags Assignment Tools and Improve Face Recognition Engine for digiKam

digiKam is an advanced open-source digital photo management application that runs on Linux, Windows, and macOS. The application provides a comprehensive set of tools for importing, managing, editing, and sharing photos and raw files.

The goal of this project is to develop a deep learning model that can recognize various categories of objects, scenes, and events in digital photos, and generate corresponding keywords that can be stored in digiKam's database and assigned to each photo automatically. The model should be able to recognize objects such as animals, plants, and vehicles, and scenes such as beaches, mountains, and cities. The model should also be able to handle photos taken in various lighting conditions and from different angles.

Mentors : Gilles Caulier, Maik Qualmann, Thanh Trung Dinh

Project Proposal

Automatic Tags Assignment Tools and Improve Face Recognition Engine for digiKam Proposal

GitLab development branch

Contacts

Email: [email protected]

Github: quochungtran

Invent KDE: quochungtran

LinkedIn: https://www.linkedin.com/in/tran-quoc-hung-6362821b3/

Project goals

Links to Blogs and other writing

Main merge request

KDE repository for object detection and face recognition researching

Issue tracker

My blog for GSoC

My entire blog :

(Week 1 - 2)

In this phase, I focus mainly on offline analysis, which aims to create a deep learning model pipeline for the object detection problem.

This is the GitHub repository I am working in for the offline analysis:

https://github.com/quochungtran/Digikam_benchmark_object_detection_algo/blob/master/pycocoDemo.ipynb

DONE

- Constructed data sets (training dataset, validation dataset, and testing dataset) for common objects such as person, bicycle, and car.

- Reprocessed the data and studied the construction of the COCO dataset, which was used for the training dataset and validation dataset.

- Researched and created a model pipeline for all versions of YOLO in Python.

TODO

- Evaluate the performance of various YOLO versions (v3, v4, v5) by considering evaluation metrics such as precision, recall, F1 score, and inference time on the testing dataset.

- Start implementing the C++ version of the YOLO algorithm in the digiKam codebase.

Structure of the COCO dataset format

The Common Objects in Context (COCO) dataset is widely recognized as one of the most popular and extensively labeled large-scale image datasets available for public use. It covers a diverse range of objects that we encounter in our daily lives, with annotations for over 1.5 million object instances across 80 categories. To explore the COCO dataset, you can visit the dedicated dataset section on SuperAnnotate's platform.

The data in the COCO dataset is stored in a JSON file, which is organized into sections such as info, licenses, categories, images, and annotations. To acquire the COCO dataset, I specifically utilized the "instances_train2017.json" and "instances_val2017.json" files, which are readily available for download.

    "info": {
        "year": "2021",
        "version": "1.0",
        "description": "Exported from FiftyOne",
        "contributor": "Voxel51",
        "url": "https://fiftyone.ai",
        "date_created": "2021-01-19T09:48:27"
    },
    "licenses": [
        {
            "url": "http://creativecommons.org/licenses/by-nc-sa/2.0/",
            "id": 1,
            "name": "Attribution-NonCommercial-ShareAlike License"
        },
        ...
    ],
    "categories": [
        ...
        {
            "id": 2,
            "name": "cat",
            "supercategory": "animal"
        },
        ...
    ],
    "images": [
        {
            "id": 0,
            "license": 1,
            "file_name": "<filename0>.<ext>",
            "height": 480,
            "width": 640,
            "date_captured": null
        },
        ...
    ],
    "annotations": [
        {
            "id": 0,
            "image_id": 0,
            "category_id": 2,
            "bbox": [260, 177, 231, 199],
            "segmentation": [...],
            "area": 45969,
            "iscrowd": 0
        },
        ...
    ]

To extract the necessary information from the COCO dataset, I utilized the COCO API, which greatly assists in loading, parsing, and visualizing annotations within the COCO format. This API provides support for multiple annotation formats.

The following table provides an overview of some useful COCO API functions:

| API | Description |
|---|---|
| getImgIds | Get image ids that satisfy the given filter conditions. |
| getCatIds | Get category ids that satisfy the given filter conditions. |
| getAnnIds | Get annotation ids that satisfy the given filter conditions. |
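
The idea behind these three lookups can be illustrated without the COCO API itself. The following is a minimal sketch in plain Python over a tiny hand-made dictionary that mimics the JSON layout shown earlier. The helper names `get_cat_ids`, `get_img_ids`, and `get_ann_ids` are hypothetical stand-ins, not the COCO API functions, the sample records are invented, and the filter semantics are deliberately simplified.

```python
# Tiny COCO-style dictionary; all records are invented for illustration.
coco = {
    "categories": [
        {"id": 1, "name": "person", "supercategory": "person"},
        {"id": 2, "name": "bicycle", "supercategory": "vehicle"},
        {"id": 3, "name": "car", "supercategory": "vehicle"},
    ],
    "images": [
        {"id": 0, "file_name": "a.jpg", "height": 480, "width": 640},
        {"id": 1, "file_name": "b.jpg", "height": 480, "width": 640},
    ],
    "annotations": [
        {"id": 0, "image_id": 0, "category_id": 1, "bbox": [260, 177, 231, 199]},
        {"id": 1, "image_id": 0, "category_id": 3, "bbox": [10, 20, 50, 40]},
        {"id": 2, "image_id": 1, "category_id": 3, "bbox": [5, 5, 100, 80]},
    ],
}


def get_cat_ids(data, names):
    """Return the ids of the categories whose name is in `names`."""
    return [c["id"] for c in data["categories"] if c["name"] in names]


def get_ann_ids(data, cat_ids):
    """Return the ids of the annotations that belong to one of `cat_ids`."""
    return [a["id"] for a in data["annotations"] if a["category_id"] in cat_ids]


def get_img_ids(data, cat_ids):
    """Return sorted ids of images containing at least one of `cat_ids`."""
    return sorted(
        {a["image_id"] for a in data["annotations"] if a["category_id"] in cat_ids}
    )


car_ids = get_cat_ids(coco, {"car"})
print(car_ids, get_img_ids(coco, car_ids), get_ann_ids(coco, car_ids))
```

Building the person, bicycle, and car subsets then amounts to resolving the category ids first and filtering images and annotations with them.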

Initially, my focus was on benchmarking the model using common object categories such as person, bicycle, and car. For these specific categories, there are currently 1,101 training images and 45 validation images available.

For the testing dataset, I decided to manually label it by utilizing a custom dataset provided by the user. This approach allows for a real-world use case scenario.

Additionally, here is a table listing some of the existing pre-trained labels in the YOLO format:

| Categories | Sub Categories |
|---|---|
| People and animals | person, cat, dog, horse, elephant, bear, etc. |
| Vehicles | bicycle, car, motorcycle, airplane, bus, train, truck, boat, etc. |
| Traffic-related objects | traffic light, stop sign, parking meter, etc. |
| Furniture | chair, couch, bed, dining table, etc. |
| Food and drink | banana, apple, sandwich, pizza, wine glass, cup, etc. |
| Sports equipment | skis, snowboard, tennis racket, sports ball, etc. |
| Electronic devices | TV, laptop, cell phone, remote, etc. |
| Household items | umbrella, backpack, handbag, suitcase, etc. |
| Kitchenware | fork, knife, spoon, bowl, etc. |
| Plants and decoration | potted plant, vase, etc. |

Below, you can find some samples from the training dataset. Each image contains multiple object annotations in the form of bounding boxes, denoted by their top-left corner coordinates (x, y) and their respective width and height (w, h).
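
As a small worked example of this box format, the sketch below converts an (x, y, w, h) box into corner coordinates and computes its area. The function names are illustrative only. The sample values are the `bbox` from the annotation excerpt shown earlier, and the computed box area happens to match the `area` field of that sample.

```python
def bbox_to_corners(bbox):
    """Convert a COCO-style box [x, y, w, h] to (x1, y1, x2, y2)."""
    x, y, w, h = bbox
    return (x, y, x + w, y + h)


def bbox_area(bbox):
    """Area of the box in pixels (width times height)."""
    _, _, w, h = bbox
    return w * h


sample = [260, 177, 231, 199]
print(bbox_to_corners(sample))  # (260, 177, 491, 376)
print(bbox_area(sample))        # 45969
```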

YOLO model pipeline

Load the YOLO network

To begin the YOLO detection process, we need to load the YOLO network. YOLO, which stands for You Only Look Once, is an incredibly fast algorithm for multi-object detection that leverages a convolutional neural network (CNN) to identify and localize objects.

In Python, I have created a pipeline for YOLO detection. To begin, we must download the pre-trained YOLO weight file and the YOLO configuration file. For this pipeline, I have chosen to use version v3 of YOLO.

The YOLO neural network consists of 254 elements, including convolutional layers (conv), rectified linear units (relu), and other components.

To load the YOLO model in Python, the following code snippet is used:

    import cv2 as cv

    # Load the network from the Darknet configuration and weight files.
    net = cv.dnn.readNetFromDarknet('yolov3.cfg', 'yolov3.weights')

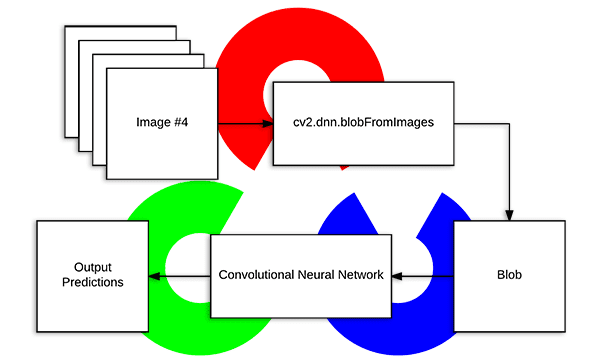

Create a blob

The input to the YOLO network is a blob object. To transform the image into a blob, we use the function cv.dnn.blobFromImage(img, scale, size, mean). This step is crucial as it involves preprocessing the data to ensure accurate predictions from the deep neural network.

The blobFromImage function performs the following operations:

- Resizing: It resizes the input image to a specific size required by the model. Deep learning models often have fixed input sizes, and the blobFromImage function ensures that the image is resized to match these requirements.

- Normalizing image: Dividing the image by 255 scales the pixel values to the range 0 to 1. During training, this helps keep gradients within a reasonable range during backpropagation, which aids stable and efficient convergence; at inference time, the input must be scaled the same way as the training data.

- Mean Subtraction: It subtracts the mean values from the image. Mean subtraction helps in normalizing the pixel values and removing the average color intensity. The mean values used for subtraction are usually pre-defined based on the dataset used to train the model.

- Channel Swapping: It reorders the color channels of the image. Deep learning models often expect images in a specific channel order, such as RGB (Red, Green, Blue). If the input image has a different channel order, the blobFromImage function swaps the channels accordingly.

In this case, the scale factor is set to 1/255, which maps pixel values from the range 0-255 to the range 0-1 while preserving the relative brightness of the original image.
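
To make these operations concrete, here is a minimal pure-Python sketch of the mean subtraction, scaling, channel swapping, and layout change. It is not OpenCV's implementation: the function name `blob_from_image` is hypothetical, resizing is left out to keep the example short, and the image is a nested list rather than a NumPy array. It assumes the mean is subtracted before scaling and that the mean is given in the output channel order.

```python
def blob_from_image(img, scale=1 / 255, mean=(0.0, 0.0, 0.0), swap_rb=True):
    """Turn an H x W list of [B, G, R] pixels into a [1][3][H][W] blob.

    Resizing is intentionally omitted in this sketch.
    """
    height, width = len(img), len(img[0])
    # Map each output channel to the input channel it is read from.
    order = (2, 1, 0) if swap_rb else (0, 1, 2)
    planes = []
    for out_c, in_c in enumerate(order):
        plane = [
            [(img[y][x][in_c] - mean[out_c]) * scale for x in range(width)]
            for y in range(height)
        ]
        planes.append(plane)
    return [planes]  # a batch holding a single image


# 1 x 2 image in BGR order: one pure blue pixel and one pure red pixel.
image = [[[255, 0, 0], [0, 0, 255]]]
blob = blob_from_image(image)
print(len(blob), len(blob[0]), len(blob[0][0]), len(blob[0][0][0]))  # 1 3 1 2
```

After the swap, the first plane holds the red values, so the red pixel ends up close to 1 in plane 0 and the blue pixel close to 1 in plane 2.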

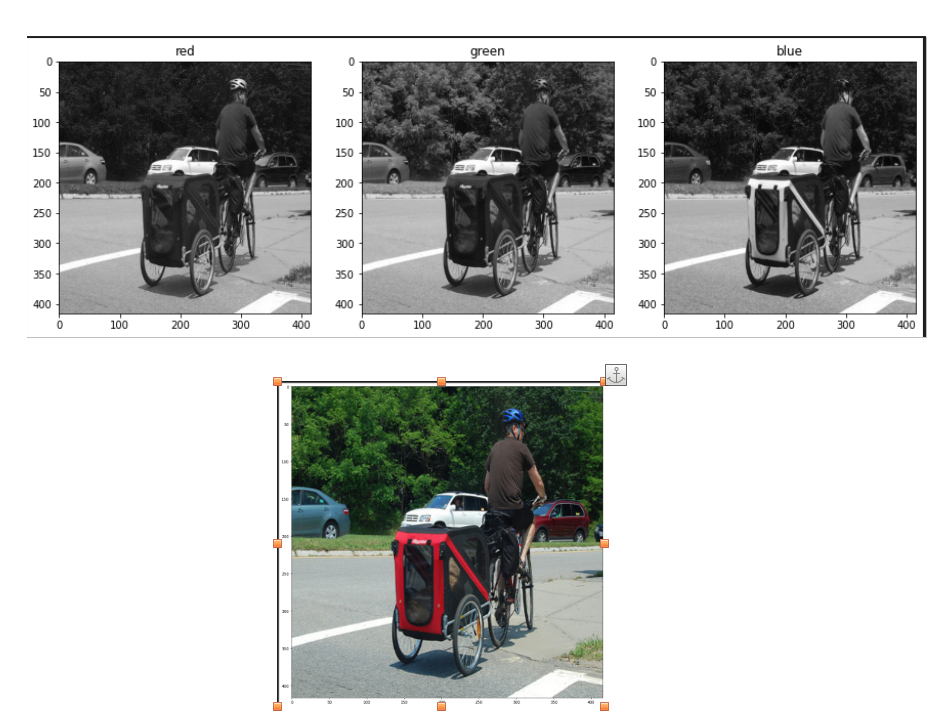

Once the image is transformed into a blob, it becomes a 4D NumPy array with dimensions (images, channels, height, width), here (1, 3, 416, 416) after resizing to (416, 416). The resulting blob consists of three channels: red, green, and blue. This can be visualized in the image below:

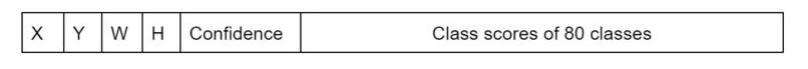

The blob is then passed as input to the network. The layer names are retrieved from the network to determine the output layers, and forward propagation is performed on those layers. Each output row is a vector of length 85:

- 4x the predicted bounding box (centerx, centery, width, height).

- 1x box confidence: refers to the confidence score or probability assigned to the predicted bounding box. It represents the model's estimation of how confident it is that the bounding box accurately encloses an object in the image.

- 80x class confidence: these scores indicate the probabilities or confidences that an object detected in the image belongs to a particular class among the 80 classes.
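
The layout of one such vector can be sketched as follows. This is an illustrative helper, not code from the notebook: the name `decode_detection` is hypothetical, the box values are assumed to be normalised to the range 0 to 1 relative to the image size, and multiplying the box confidence by the best class score is one common convention chosen here as an assumption.

```python
def decode_detection(row, img_w, img_h, class_names):
    """Turn one 85-value output row into (box, confidence, class_name).

    The returned box is [left, top, width, height] in pixels.
    """
    cx, cy, w, h = row[0:4]
    box_conf = row[4]
    scores = row[5:]
    # Index of the class with the highest score.
    class_id = max(range(len(scores)), key=scores.__getitem__)
    # Convert the normalised centre/size box to a top-left pixel box.
    bw, bh = w * img_w, h * img_h
    left = cx * img_w - bw / 2
    top = cy * img_h - bh / 2
    box = [round(left), round(top), round(bw), round(bh)]
    return box, box_conf * scores[class_id], class_names[class_id]


names = [f"class_{i}" for i in range(80)]
names[0] = "person"
row = [0.5, 0.5, 0.25, 0.5, 0.9] + [0.0] * 80
row[5] = 0.8  # score of class 0
box, confidence, label = decode_detection(row, 640, 480, names)
print(box, label)  # [240, 120, 160, 240] person
```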

Post processing

After obtaining the bounding boxes and their corresponding confidences from the output of an object detection model, it is common to apply a technique called Non-Maximum Suppression (NMS) to select the best bounding boxes.

NMS is a post-processing step that helps eliminate redundant or overlapping bounding box detections. Its purpose is to select the most accurate and representative bounding boxes while removing duplicates or highly overlapping detections.

In OpenCV, the cv.dnn.NMSBoxes() function is a convenient utility function that performs NMS. It accepts several parameters to fine-tune its behavior:

- boxes: This parameter represents the bounding boxes detected in the image. Each bounding box is typically represented as a list of four values (x, y, width, height) or as a tuple.

- confidences: This parameter contains the confidence scores associated with each bounding box. The confidence scores indicate the likelihood that the corresponding bounding box contains an object of interest.

- score_threshold: This parameter specifies the minimum confidence score threshold for considering a bounding box during NMS. Any bounding box with a confidence score below this threshold will be disregarded.

- nms_threshold: This parameter determines the overlap threshold for suppressing redundant bounding boxes. If the overlap between two bounding boxes exceeds this threshold, the one with the lower confidence score is suppressed.

The cv.dnn.NMSBoxes() function returns the indices of the selected bounding boxes that passed the NMS process. These indices correspond to the original list of bounding boxes and confidences, allowing you to access the selected boxes and their associated information.
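
To show what these parameters control, here is a minimal greedy NMS written in plain Python. It is a sketch of the general technique, not OpenCV's implementation, and the names `iou` and `nms` are chosen for illustration. Overlap is measured as intersection over union (IoU), and the function returns indices into the original lists, like the OpenCV utility.

```python
def iou(a, b):
    """Intersection over union of two [x, y, w, h] boxes."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    inter_w = max(0, min(ax2, bx2) - max(a[0], b[0]))
    inter_h = max(0, min(ay2, by2) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, confidences, score_threshold, nms_threshold):
    """Return the indices of the boxes kept by greedy NMS."""
    # Drop low-confidence boxes, then visit the rest from best to worst.
    order = sorted(
        (i for i, c in enumerate(confidences) if c >= score_threshold),
        key=lambda i: confidences[i],
        reverse=True,
    )
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with a kept one.
        if all(iou(boxes[i], boxes[j]) <= nms_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [[100, 100, 50, 50], [105, 105, 50, 50], [300, 300, 40, 40], [0, 0, 10, 10]]
confidences = [0.9, 0.8, 0.7, 0.2]
print(nms(boxes, confidences, score_threshold=0.5, nms_threshold=0.4))  # [0, 2]
```

In this example the second box overlaps the first with an IoU of about 0.68 and is suppressed, while the last box is discarded by the score threshold before overlap is even considered.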

Brainstorming: Multiple Options for Object Tagging in digiKam

In this project, my goal is to let users choose specific options from a predefined set of 80 classes for automatic tagging of input images within digiKam. We achieve this by leveraging the object detection capabilities of YOLO.

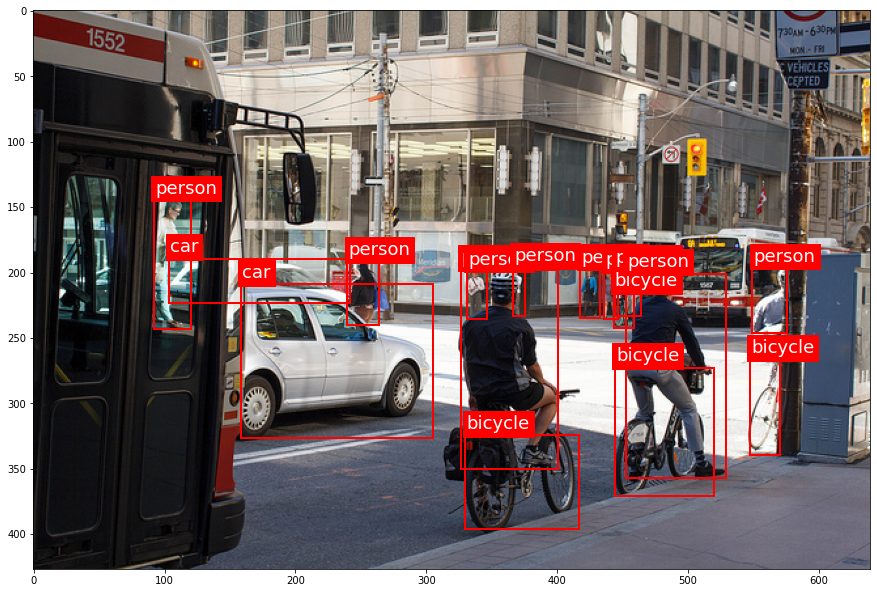

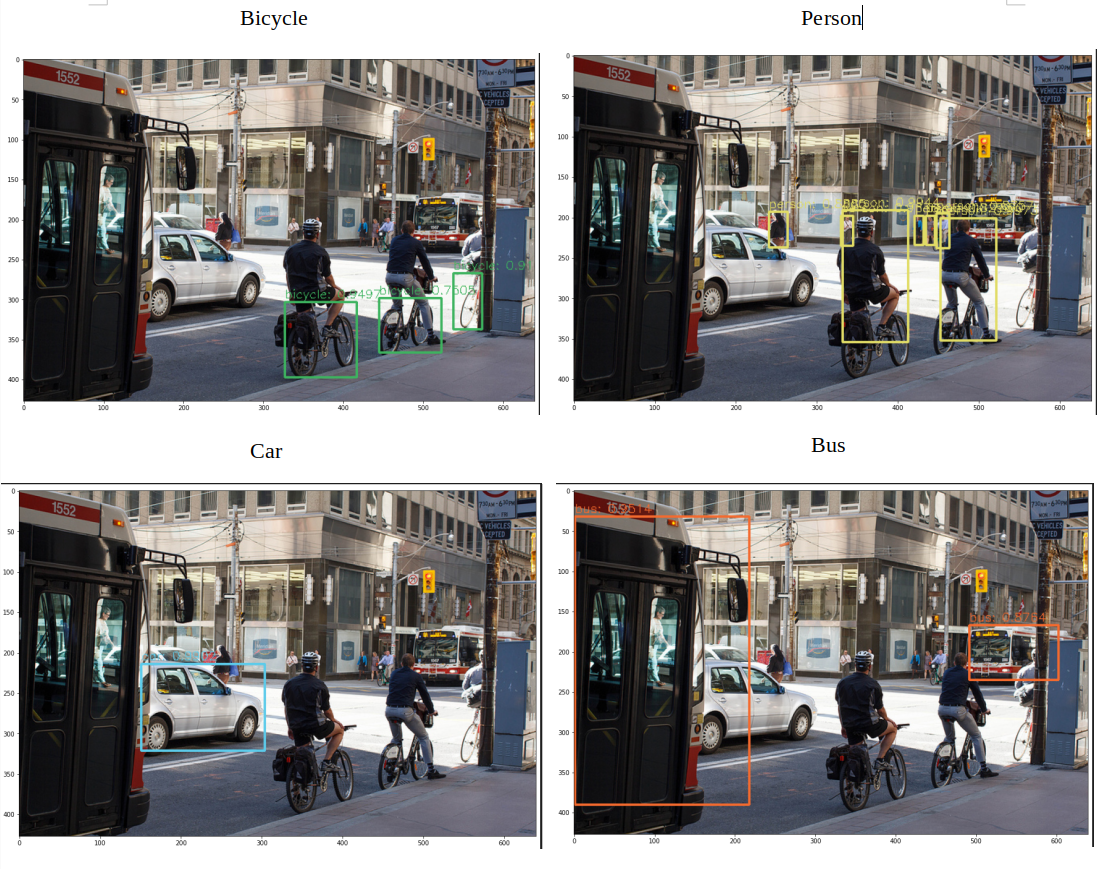

Let's take a look at an example from my research notebook. This picture includes objects such as 'person', 'bicycle', 'car', and 'bus':

After applying the YOLO model to the image, we obtain the following visualization, with one panel per option selected by the user:

Each image in the visualization represents a detected object, accompanied by a bounding box that highlights its location. This provides users with a clear understanding of the detected objects in the image.

In digiKam, users have the freedom to select specific objects from the detected classes and assign appropriate tags to them. This flexibility allows for a personalized approach to organizing and categorizing images based on individual preferences and requirements.
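
The selection step can be sketched as a simple filter over the detector output. This is an illustration in Python rather than digiKam code: the function name `tags_for_image` and the detection tuples are invented for the example.

```python
def tags_for_image(detections, selected_classes):
    """Keep only the class names the user selected, without duplicates."""
    tags = []
    for class_name, _confidence in detections:
        if class_name in selected_classes and class_name not in tags:
            tags.append(class_name)
    return tags


# Invented detector output: (class name, confidence) pairs.
detections = [("person", 0.92), ("car", 0.88), ("bus", 0.75), ("person", 0.61)]
print(tags_for_image(detections, {"person", "bus"}))  # ['person', 'bus']
```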

(Week 3 - 4)

DONE

- Researched and created the deep learning pipeline to benchmark the different YOLO versions in terms of accuracy and inference time, and decided which one should be integrated into digiKam.

- Exported the ONNX files of the YOLO versions for inference.

- Documentation

TODO

- Develop a mock widget in C++

YOLOv3 and YOLOv4 Performance

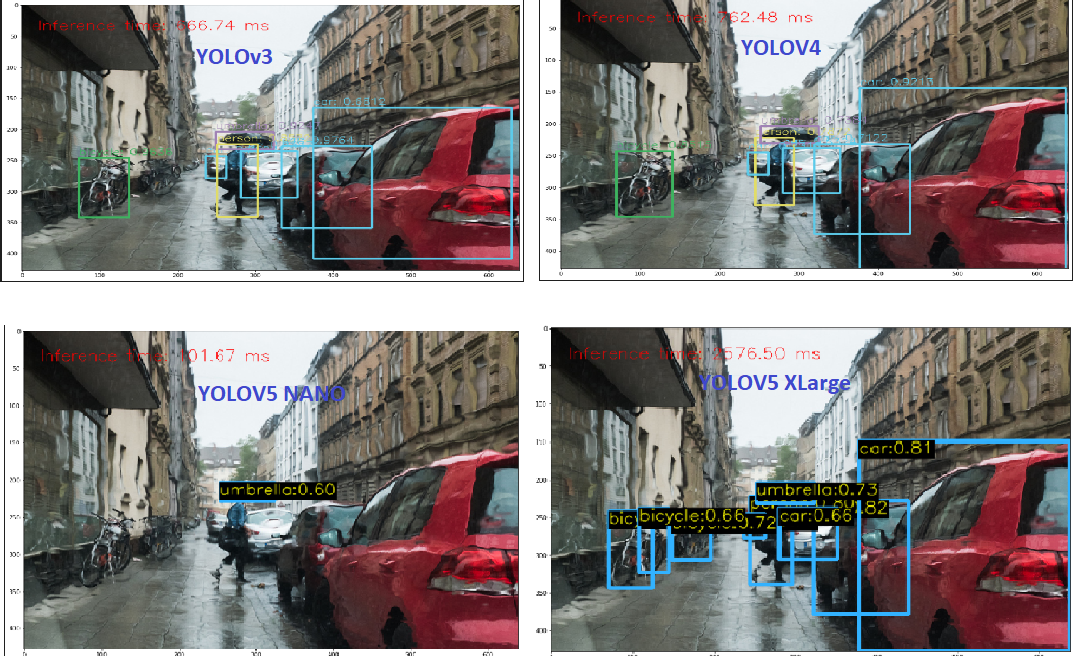

Currently, the digiKam face engine uses YOLOv3 and SSD for face detection, and YOLOv3 gives the better accuracy of the two. As a rough estimate, on a modern CPU, YOLOv3 can achieve around 1-2 FPS with an inference time of 500-1000 milliseconds per image.

For the object detection project, I benchmarked YOLOv3 and YOLOv4 on my local machine (AMD Ryzen 5 5600H CPU with Radeon Graphics, 6 cores), running over the 45 images of the validation dataset to calculate the average inference time and FPS (frames per second) of each version. The measured inference times were in line with the estimate above.

Link to the notebook:

| YOLO version | Average Inference Time | Average FPS |
|---|---|---|
| YOLOV3 | 673.43 ms | 1.5 frames/s |
| YOLOV4 | 772 ms | 1.3 frames/s |
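
For reference, this kind of measurement can be sketched as a timing loop. The code below is a minimal illustration, not the notebook's code: `benchmark` and `fps_from_latency` are hypothetical names, `run_inference` stands for any callable that processes one image, and FPS is derived here as the reciprocal of the average latency.

```python
import time


def fps_from_latency(avg_ms):
    """Frames per second implied by an average latency in milliseconds."""
    return 1000.0 / avg_ms


def benchmark(run_inference, images):
    """Return (average latency in ms, FPS derived from that average)."""
    elapsed = []
    for image in images:
        start = time.perf_counter()
        run_inference(image)
        elapsed.append((time.perf_counter() - start) * 1000.0)
    avg_ms = sum(elapsed) / len(elapsed)
    return avg_ms, fps_from_latency(avg_ms)


print(round(fps_from_latency(673.43), 1))  # 1.5
print(round(fps_from_latency(772.0), 1))   # 1.3
```

With a real detector, `run_inference` would wrap the blob creation and the forward pass for a single image.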

It appears that YOLOv3 performs slightly better than YOLOv4 in terms of both inference time and FPS. YOLOv3 has a lower average inference time and a higher average FPS, indicating faster processing and better real-time performance compared to YOLOv4 on the tested CPU. YOLOv4 is generally considered to be a more advanced and complex model compared to YOLOv3. It introduces several architectural changes and additional components to improve accuracy and detection capabilities. However, these advancements may come at the cost of increased computational requirements, resulting in longer inference times and lower FPS.

Model selection (Experiences with YOLOv5) --- Convert YOLO model to ONNX format

In order to address this issue, several questions should be taken into consideration when selecting a model:

- Which YOLO model is the fastest on the CPU?

- Which YOLO model is the most accurate?

Load the YOLOv5 model using ONNX:

Since YOLOv5 is implemented in PyTorch rather than the Darknet framework, we cannot directly use the cv2.dnn.readNetFromDarknet() function in OpenCV to load the YOLOv5 model. The face detection code in digiKam currently uses readNetFromDarknet(), which is specifically meant for models trained with the Darknet framework.

Instead, we need to convert the YOLOv5 model to the ONNX format and then use the cv2.dnn.readNetFromONNX() function in OpenCV to load the ONNX model. ONNX stands for Open Neural Network Exchange. It is an open-source format designed for interoperability between different deep learning frameworks. ONNX makes it possible to export trained models from one framework and import them into another, making it easier to deploy and use models across various platforms and frameworks. Luckily, OpenCV provides a familiar and easy-to-use API for loading, running, and post-processing ONNX models, which allows me to integrate ONNX models seamlessly into our computer vision pipelines.

    import cv2

    # Load the exported YOLOv5 Nano model from its ONNX file.
    net = cv2.dnn.readNetFromONNX('yolov5nano.onnx')

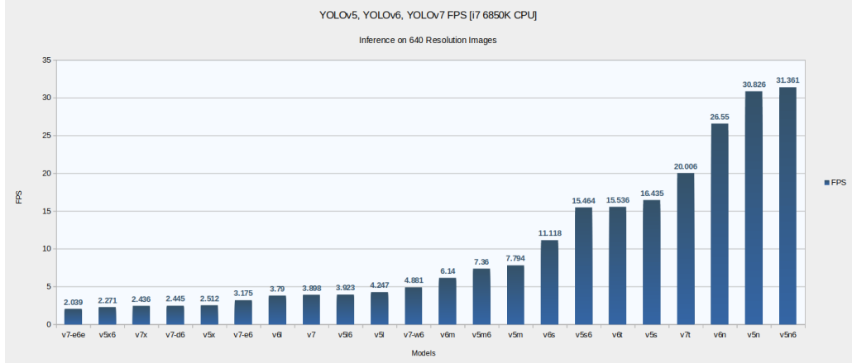

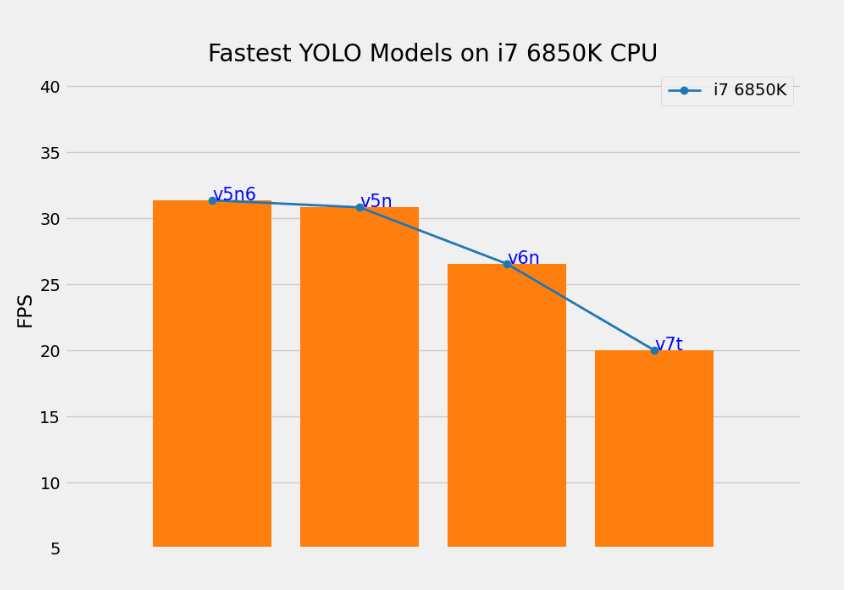

Although the numbers vary depending on the CPU architecture, we can find a similar trend for the speed: the smaller the model, the faster it is on the CPU. According to the research in this article, the YOLOv5 Nano and Nano P6 models give the fastest results on 640 resolution and 1280 resolution images.

However, in terms of accuracy, the larger models from each family (v5x, v6l, v7x) perform quite well. Because I intend to use the COCO classes for tag assignments, using one of the mentioned pre-trained models will give very accurate predictions. See the figure below from the article.

| YOLO version | Average Inference Time | Average FPS |
|---|---|---|
| YOLOV3 | 673.43 ms | 1.5 frames/s |
| YOLOV4 | 772.6 ms | 1.3 frames/s |
| YOLOV5 nano | 80 ms | 14 frames/s |
| YOLOV5 Xlarge | 1442 ms | 1.3 frames/s |

Some remarks I would like to discuss:

YOLOv5 Nano: YOLOv5 Nano demonstrates significantly faster inference times and higher FPS compared to YOLOv3 and YOLOv4. It has an average inference time of 80 ms, resulting in 14 frames per second. YOLOv5 Nano's lightweight design makes it well-suited for low-power or resource-constrained devices where real-time object detection is desired.

YOLOv5 XLarge: YOLOv5 XLarge has a much longer average inference time of 1442 ms, with an average of 1.3 frames per second. While it offers potentially higher accuracy due to its larger model size, it sacrifices real-time performance. YOLOv5 XLarge might be more suitable for scenarios where accuracy is prioritized over speed.

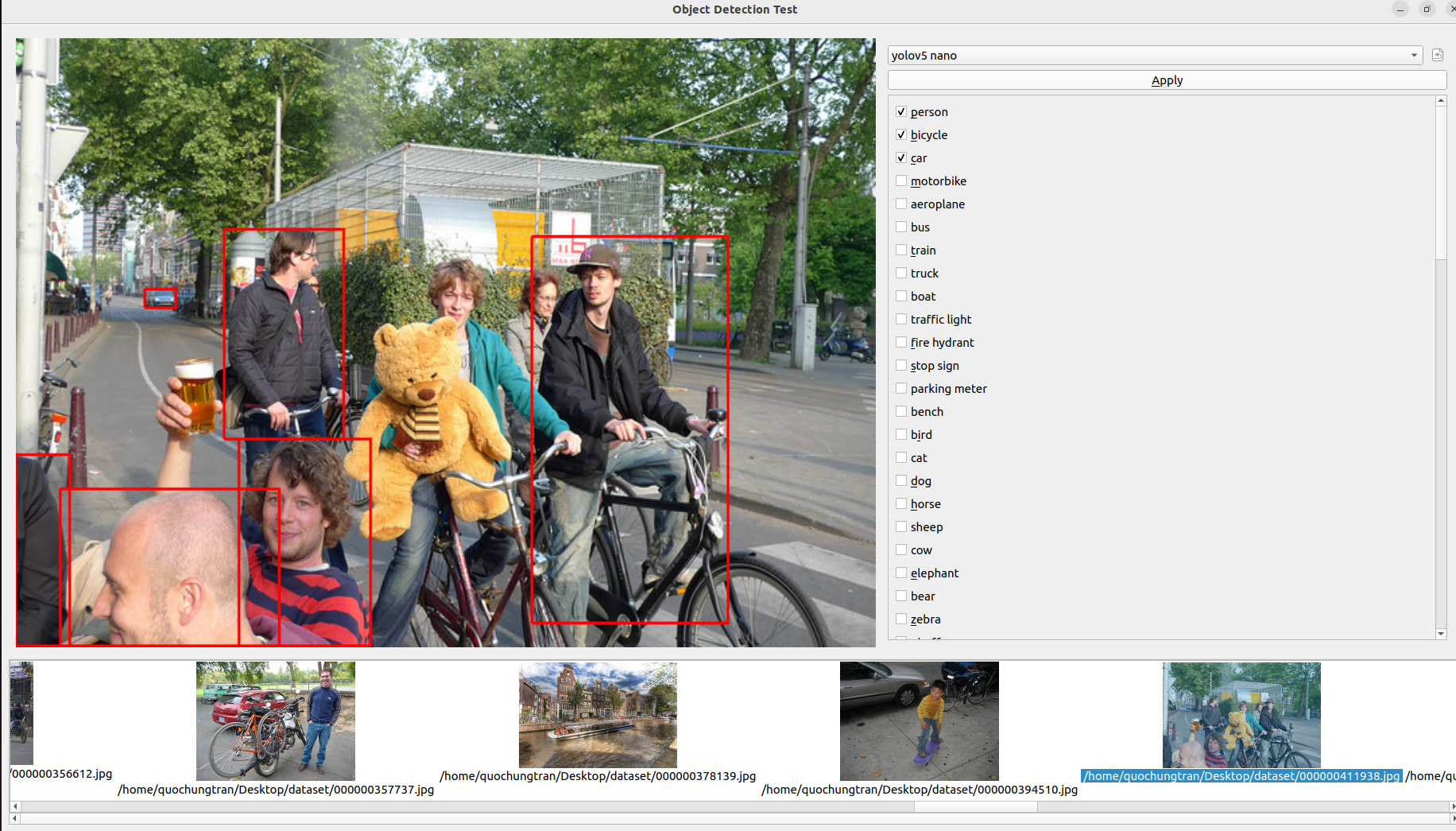

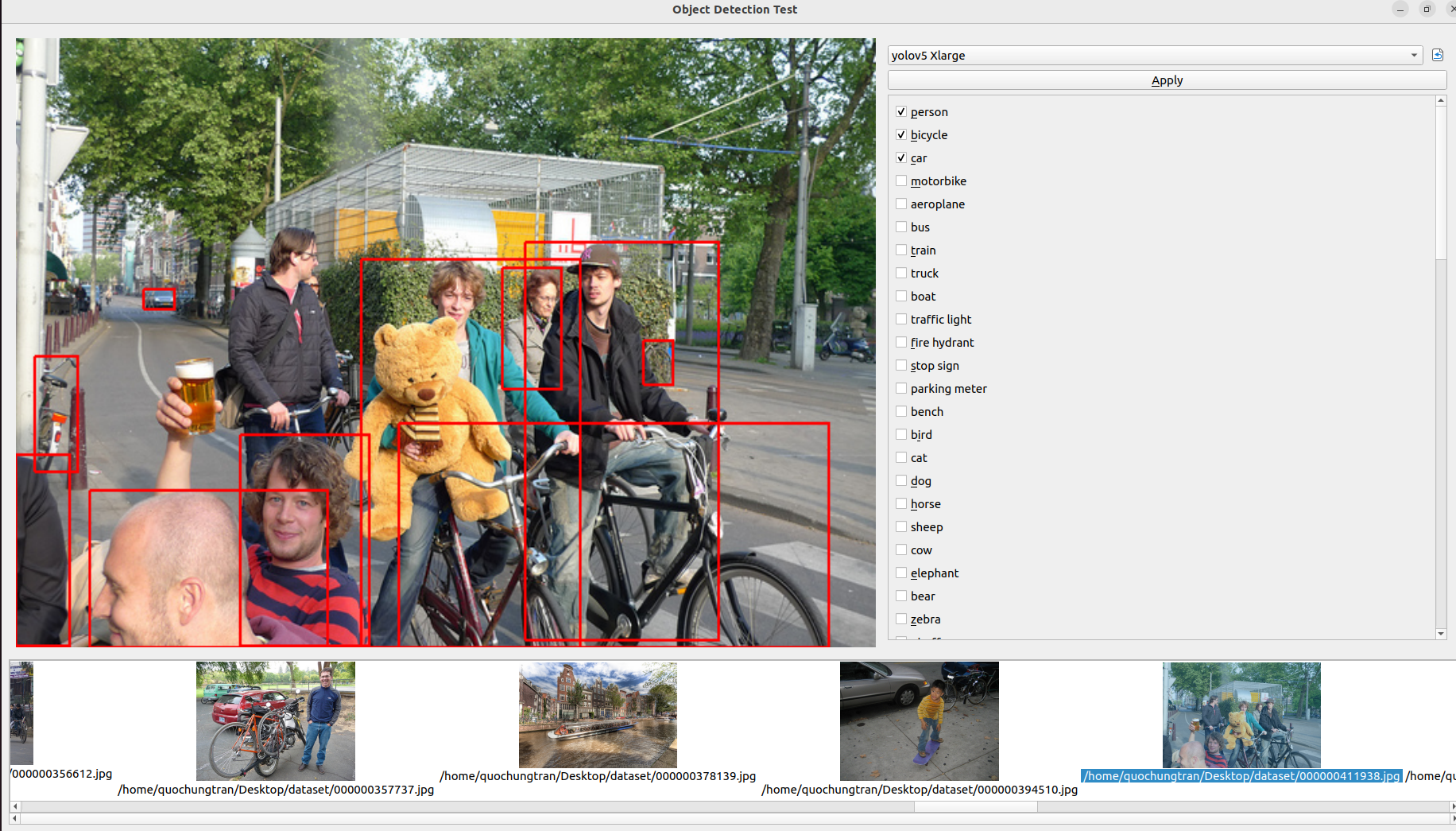

The YOLO family detects objects quite well in many kinds of images, and the following results show good output for the 80 pre-trained object classes. Regarding the observation that YOLOv5 Nano performs well on blurred or complex images, it's worth noting that the model's performance can vary based on factors like dataset, training methodology, and specific use cases. YOLOv5 models, in general, offer a trade-off between accuracy and speed, with larger models providing higher accuracy at the cost of slower inference times. The image below shows a comparison of object detection across this family of YOLO versions.

Precision measures the accuracy of positive predictions, while recall measures the completeness of positive predictions. High precision and high recall are desirable. In the case of the YOLO algorithm, it tends to predict correct examples with a relatively higher precision score. However, it may not detect all the desired objects, impacting its recall. When considering the different YOLO models, YOLOv5 XLarge stands out by achieving very high precision and recall scores. This model outperforms the others and is capable of detecting even smaller objects accurately.
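
As a reminder of how these metrics are computed, precision is TP / (TP + FP) and recall is TP / (TP + FN), where TP, FP, and FN are the counts of true positives, false positives, and false negatives; the F1 score is their harmonic mean. The short sketch below uses invented counts purely to illustrate a high-precision, lower-recall detector; they are not measurements from this project.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# Invented counts: 8 correct detections, 2 false alarms, 8 missed objects.
p, r, f1 = precision_recall_f1(tp=8, fp=2, fn=8)
print(p, r, round(f1, 3))  # 0.8 0.5 0.615
```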

In conclusion, for the next phase:

- I plan to develop a testing mock GUI in C++ to benchmark various algorithms before integrating them into digiKam tools. This GUI will specifically focus on benchmarking the latest version of YOLO for object detection. It will provide support for benchmarking multiple versions of object detection algorithms, displaying frames per second (FPS), and measuring inference times.

- Additionally, it is important to consider hardware acceleration in this use case. OpenCV offers optimizations for hardware acceleration, such as leveraging specialized libraries like OpenVINO or utilizing GPU acceleration. These optimizations can significantly enhance the performance of running ONNX models on compatible hardware.

(Week 5 - 6)

DONE :

- Created integration testing tools to benchmark the DNN model detector.

- Documentation.

Main merge request:

https://invent.kde.org/graphics/digikam/-/merge_requests/221

TODO :

- Study the code base to integrate the auto-tags feature into the Maintenance or BQM tools.

- Focus on applying the detector in batch.

- Documentation.

After several weeks of research, I decided to deploy two models into digiKam: YOLOv5 Nano and YOLOv5 XLarge. First of all, I created integration testing tools. Integration testing plays a crucial role in ensuring the seamless functioning of software systems. As part of my ongoing efforts to optimize testing processes, I plan to create a Merge Request (MR) that integrates benchmarking tools for the YOLO (You Only Look Once) models. This MR aims to enhance the capabilities of the testing suite by introducing two essential components: a user-friendly control panel and an intuitive image area for displaying detected objects. In this blog post, I will outline the features of these tools and highlight their faster inference compared to the earlier Python-based pipeline.

The integration testing tools I'm developing will consist of two primary elements:

- Control Panel with Model Selection and Predefined Classes:

The control panel will serve as the central interface for the benchmarking process. It will provide users with a range of options to select the desired YOLO model for evaluation. Additionally, the control panel will feature the full list of 80 predefined classes, from which users select the ones to test, enabling efficient testing across a wide range of object detection scenarios.

- Image Area for Displaying Detected Objects:

To provide a visually engaging and informative experience, the tools will incorporate an image area that displays all the detected objects. This intuitive interface allows users to observe the precision and accuracy of the YOLO models in action. By visualizing the results, testers can gain valuable insights into the performance and reliability of the integration tests.

Unparalleled Performance:

One of the primary reasons for integrating these benchmarking tools into your testing suite is their exceptional performance. In terms of inference time per image, the tools outperform existing Python-based alternatives. Let's take a look at the average performance of the two YOLO models when running on a CPU:

- YOLOv5 Nano: With an impressive average inference time of just 57 ms (compared to 80ms in Python), the YOLOv5 Nano model offers exceptional speed and efficiency. It enables you to swiftly evaluate the integration of smaller-scale object detection scenarios.

- YOLOv5 XLarge: For more complex object detection requirements, the YOLOv5 XLarge model showcases remarkable capabilities. Despite the additional complexity, it still maintains an average inference time of 724 milliseconds (compared to 1400ms in Python), ensuring reliable and precise testing for larger-scale scenarios.

(Week 7 - 8)

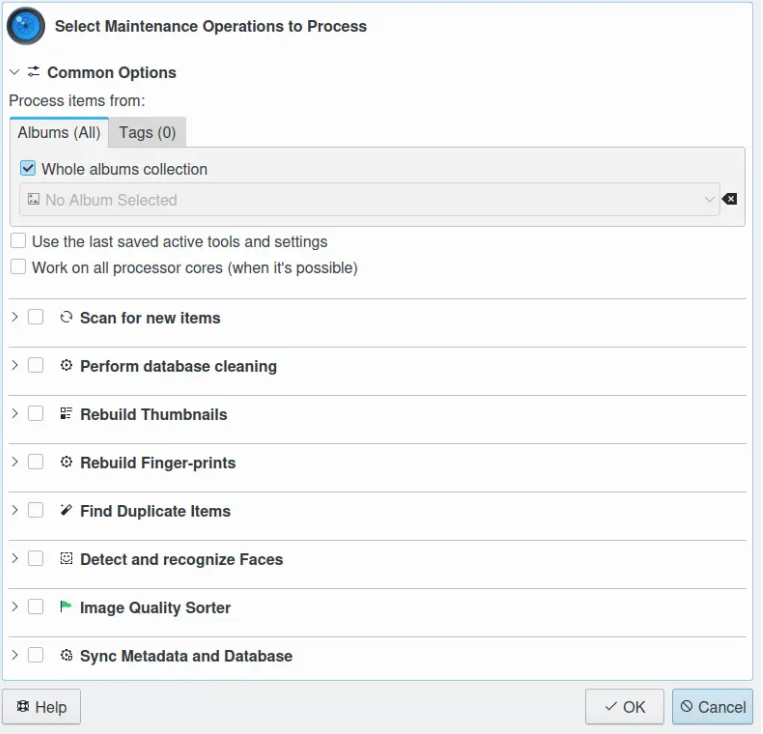

This week, I studied how to integrate the feature into the Maintenance and BQM tools, so let me briefly introduce these tools.

As with the Image Quality Sorter, the tool (e.g. Maintenance or BQM) will not ask the user whether the estimated values (Pick Labels) are fine during the analysis. Settings route the values to the right place in the database, and everything is done automatically.

Following Gilles's suggestions:

- Adding a new maintenance tool in the chained process (Maintenance is not yet a plugin).

Maintenance is a tool running processes in the background to maintain image collections and database contents. The list of tools is presented in a sequential order that cannot be changed; only the tools to activate or deactivate during a maintenance session can be selected. The sequence matters because it reflects the order in which information is first populated in the database and then used afterwards. So the mission here is to add a new checkbox widget for the auto-tags process to the existing Maintenance dialog (shown in the image below):

Two of the tools in the list use deep learning: Detect and Recognize Faces, which performs automatic face management updates, and Image Quality Sorter, which performs an automatic scan of items to sort them by quality and apply Pick Labels in the database. The core engine and the GUI are described in this documentation:

https://docs.digikam.org/en/batch_queue.html

- Adding a new BQM tool (in this case it's a plugin).

digiKam features a batch queue manager in a separate window to easily process items in batch (filtering, converting, transforming, etc.). It works with all supported image formats, including RAW files.

- Adding a new menu entry to process an item from preview mode (typically to parse the single item currently viewed by the end-user).

(Week 9 - 10)

DONE :

- Developed a checkbox widget for autotag assignments within the digiKam Maintenance dialog.

- Established a connection between the frontend widget and backend to enable tag generation during the Maintenance process in digiKam.

- Documentation.

TODO :

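- Use the API of the ItemInfo object to populate tags automatically in the database (creating a new TagAlbum accordingly).

- Study more lightweight models.

Main Commits :

606bcd54: https://invent.kde.org/graphics/digikam/-/merge_requests/221/diffs?commit_id=606bcd54784983c8a2fb1ad1e4c122575770a527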

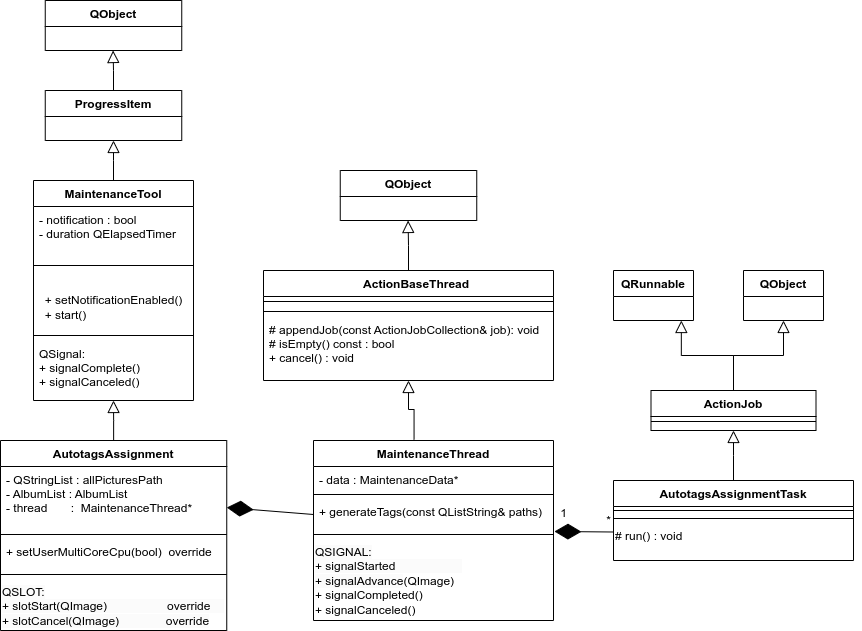

Architecture of Maintenance Tool

This process uses an internal Maintenance multi-threaded mechanism to generate tags automatically from input images. Classes in this part are implemented on top of existing objects that manage and chain threads in digiKam. The idea is inspired by QThreadPool and QRunnable: QThreadPool is a collection of reusable QThreads, so existing threads can be reused for new tasks. MaintenanceThread manages the instantiation of AutotagsAssignmentTask objects. Each AutotagsAssignmentTask initializes one AutoTags engine object to handle the URL of one image.

The purpose is to allow each process to run in parallel and stop properly. The run() method of object AutotagsAssignmentTask is a virtual method that will be reimplemented to facilitate advanced thread management. Here is the architecture of this part:

MaintenanceThread is encapsulated by AutotagsAssignment, a MaintenanceTool widget object of the kind used generically around the code base for the other Maintenance processes.
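
The one-task-per-image pattern can be illustrated with a loose Python analogy. This is not the digiKam C++ code: it uses the standard library `ThreadPoolExecutor` in place of QThreadPool, and the `AutoTagsEngine` stub, `autotags_task`, and `run_maintenance` are hypothetical names invented for the sketch.

```python
from concurrent.futures import ThreadPoolExecutor


class AutoTagsEngine:
    """Hypothetical stand-in for the detection engine."""

    def tags_for(self, url):
        # A real engine would run the detector; this stub derives a fake tag
        # from the file name so the example stays self-contained.
        return [url.rsplit(".", 1)[0]]


def autotags_task(url):
    """One task per image URL, each with its own engine instance."""
    engine = AutoTagsEngine()
    return url, engine.tags_for(url)


def run_maintenance(urls, max_workers=4):
    """Run the tasks on a pool of reusable worker threads."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(autotags_task, urls))


print(run_maintenance(["cat.jpg", "beach.png"]))
# {'cat.jpg': ['cat'], 'beach.png': ['beach']}
```

Leaving the `with` block waits for the running tasks to finish, which loosely mirrors the goal of letting the parallel work stop properly.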

Main back-end AutoTagging feature

As per my mentors' suggestion, the utilization of item keywords (tags) usually suffices to populate information derived from AI analysis, aimed at identifying forms, objects, locations, flora, fauna, and more. The API for this purpose already exists within the existing database, facilitating the integration of such information. Therefore, in this context, the predicted classes hold greater significance.

I implemented a class named "AutoTags" for this purpose. This class is designed to initialize the relevant model, preprocess the input image for detection, and subsequently generate and store tags based on the output classes, which are then returned as the final results.
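
The three responsibilities described above can be outlined as follows. This is a hypothetical Python outline only: the method names, the injected `detector` callable, and the confidence threshold are assumptions made for the sketch and do not mirror the actual digiKam C++ class.

```python
class AutoTags:
    """Hypothetical outline; names do not mirror the digiKam C++ API."""

    def __init__(self, detector, class_names, threshold=0.5):
        # detector: callable taking a preprocessed image and returning
        # a list of (class_id, confidence) pairs.
        self.detector = detector
        self.class_names = class_names
        self.threshold = threshold

    def preprocess(self, image):
        # Placeholder for resizing and normalising; returns the input as is.
        return image

    def generate_tags(self, image):
        """Return the sorted, de-duplicated class names above the threshold."""
        detections = self.detector(self.preprocess(image))
        tags = {
            self.class_names[class_id]
            for class_id, confidence in detections
            if confidence >= self.threshold
        }
        return sorted(tags)


def fake_detector(_blob):
    # Invented output standing in for a real model.
    return [(0, 0.9), (2, 0.8), (0, 0.7), (1, 0.3)]


engine = AutoTags(fake_detector, ["person", "bicycle", "car"])
print(engine.generate_tags(image=None))  # ['car', 'person']
```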